ChatGPT has taken the business world by storm. Many consider it to be the breakthrough AI application that will push automation to new levels – not just on the factory floor but in the front office and beyond.

But even though adoption is moving forward at a rapid pace, a full understanding of what ChatGPT is and how it works is sorely lacking, particularly among those who are expected to use it to make themselves more productive.

Here, Techopedia explains the basics one needs to know before implementing this AI tool in critical business applications.

Here’s How ChatGPT Works

ChatGPT’s Features

The key component of any AI model is the algorithm. This provides the basic processes and sets of rules to solve a problem within a mathematical framework.

ChatGPT uses many of the same algorithmic elements found in other AI models.

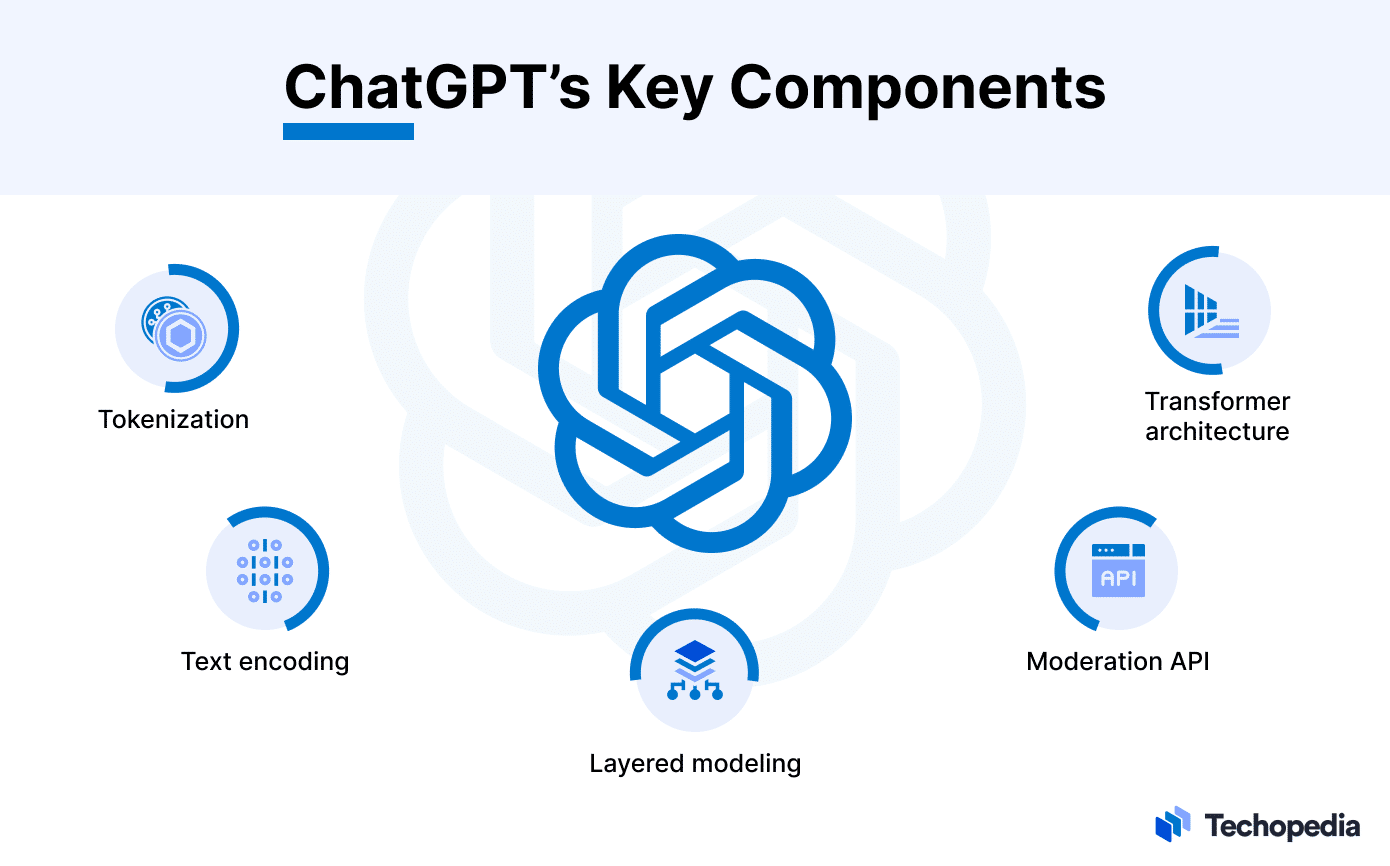

Among them:

- Tokenization: A means to break down text into smaller, more manageable components.

- Text Encoding: Mapping each token to a numerical ID, which the model then converts into a vector (an embedding) that it can compute with.

- Layered Modeling: Stacked layers of interconnected processing units, each applying learned weights to the output of the layer before it so that the model can judge how significant each piece of input is.

- Moderation API: A filter for inappropriate content.

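- Transformer Architecture: A neural network architecture that uses a mechanism called self-attention to weigh how strongly each token relates to every other token, which makes it well suited to understanding and generating text.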
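To make the first few elements concrete, here is a minimal toy sketch of tokenization, text encoding, and a single self-attention step. It is not how ChatGPT is implemented: the whitespace tokenizer, the function names (`tokenize`, `build_vocab`, `encode`, `embed`, `self_attention`), and the sine-based "embeddings" are all hypothetical stand-ins chosen so the example runs with only the standard library.

```python
import math


def tokenize(text: str) -> list[str]:
    # Toy tokenizer: lowercase the text and split on whitespace.
    # Production LLM tokenizers use subword schemes such as byte-pair encoding.
    return text.lower().split()


def build_vocab(tokens: list[str]) -> dict[str, int]:
    # Give each distinct token an integer ID in order of first appearance.
    vocab: dict[str, int] = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def encode(tokens: list[str], vocab: dict[str, int]) -> list[int]:
    # Text encoding, step 1: replace each token with its numerical ID.
    return [vocab[tok] for tok in tokens]


def embed(ids: list[int], dim: int = 4) -> list[list[float]]:
    # Text encoding, step 2: map each ID to a vector. These fixed sine values are a
    # hypothetical stand-in; a real model learns its embedding vectors in training.
    return [[math.sin((i + 1) * (d + 1)) for d in range(dim)] for i in ids]


def softmax(xs: list[float]) -> list[float]:
    # Turn raw scores into positive weights that sum to 1.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors: list[list[float]]) -> list[list[float]]:
    # Single-head scaled dot-product attention. For brevity the input vectors serve
    # directly as queries, keys, and values; real transformers first multiply them
    # by learned projection matrices and add positional information.
    dim = len(vectors[0])
    outputs = []
    for q in vectors:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in vectors]
        weights = softmax(scores)
        # Each output is a weighted average of all the token vectors.
        outputs.append([sum(w * v[d] for w, v in zip(weights, vectors)) for d in range(dim)])
    return outputs
```

For example, `tokenize("The cat sat on the mat")` yields six tokens, and `encode` turns them into `[0, 1, 2, 3, 0, 4]`: both occurrences of "the" share one ID. Because this sketch adds no positional information, those two tokens also receive identical attention outputs, which is one reason real transformers encode word position as well.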

How Does It Learn?

ChatGPT is built on a large language model (LLM), a type of machine learning (ML) model that is trained on vast amounts of text and learns to produce outputs that resemble the data it has ingested.

This provides a level of natural language processing (NLP) that closely mimics the styles and patterns of human speech rather than the stilted, robotic responses of earlier chatbots.

A key element in ChatGPT’s design is the way it incorporates supervised and reinforcement learning during the training process. Supervised learning trains a model on labeled examples, each pairing an input with the desired output, usually a prescribed prediction, action, or response.
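Supervised learning in its simplest form looks like the sketch below: the model sees inputs paired with correct labels and repeatedly adjusts its parameters to shrink the gap between its predictions and those labels. This hypothetical example fits a straight line with gradient descent; the data, learning rate, and epoch count are illustrative assumptions, and ChatGPT's own supervised stage trains a far larger network on example conversations rather than number pairs.

```python
def train_linear(
    examples: list[tuple[float, float]], lr: float = 0.05, epochs: int = 2000
) -> tuple[float, float]:
    # Fit prediction = w * x + b to labeled (input, label) pairs.
    w, b = 0.0, 0.0
    n = len(examples)
    for _ in range(epochs):
        # Gradients of the mean squared error between predictions and labels.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in examples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in examples) / n
        # Nudge the parameters in the direction that reduces the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Labeled data generated by the rule y = 2x + 1; the model must recover that rule.
labeled = [(float(x), 2.0 * x + 1.0) for x in range(5)]
w, b = train_linear(labeled)
print(round(w, 3), round(b, 3))  # prints 2.0 1.0
```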

Reinforcement learning (RL) uses rewards and other incentives to guide AI into making proper decisions, much like the way performance or service animals are given a treat when they behave correctly.
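The "treat for good behavior" idea can be shown with a classic textbook RL setting, the multi-armed bandit. In this hypothetical sketch an agent repeatedly picks one of several actions, receives a reward of 1 (the treat) with an action-specific probability it does not know, and gradually learns which action pays off best. It uses an epsilon-greedy strategy, a simple technique swapped in for illustration; it is not the algorithm OpenAI used to train ChatGPT.

```python
import random


def run_bandit(
    reward_probs: list[float], steps: int = 5000, epsilon: float = 0.1, seed: int = 0
) -> tuple[list[float], list[int]]:
    rng = random.Random(seed)  # seeded so runs are reproducible
    n_actions = len(reward_probs)
    estimates = [0.0] * n_actions  # average reward observed per action
    counts = [0] * n_actions  # how often each action was tried
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(n_actions)
        else:
            # Exploit: pick the action with the best reward so far.
            action = max(range(n_actions), key=lambda a: estimates[a])
        # The environment hands out a reward of 1 with the action's hidden probability.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Update the running average reward for the chosen action.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


estimates, counts = run_bandit([0.2, 0.5, 0.8])
best = max(range(len(estimates)), key=lambda a: estimates[a])
print("learned best action:", best)
```

Over time the rewards steer the agent toward the action that earns treats most often, which is the same principle RL applies to far richer decisions.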

ChatGPT uses a blended form of supervised and reinforcement learning called Reinforcement Learning from Human Feedback (RLHF), which can also be found in numerous generative AI (GAI) models from Google, Meta, and others.

The idea behind RLHF is to develop an AI that not only predicts the right word during any given exchange but also gauges which response its interlocutor would prefer.

This is easier said than done, of course. It typically requires large datasets in which humans have ranked or rated responses across a wide range of circumstances, a reward model trained on those rankings to score new outputs, and feedback loops that reward preferred outputs and penalize poor ones.
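One piece of RLHF that can be sketched compactly is the reward model. Human labelers compare two responses and mark the one they prefer; the reward model is then trained so that the preferred response scores higher, commonly by minimizing the pairwise loss shown below. In this hypothetical sketch each response is reduced to two made-up numeric features (imagine "relevance" and "politeness"), and the reward model is a simple linear function; real reward models are neural networks that score the full text, and the scores they produce are then used to fine-tuning the chatbot itself.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def reward(w: list[float], features: list[float]) -> float:
    # Linear reward model: a weighted sum of the response's features.
    return sum(wi * fi for wi, fi in zip(w, features))


def train_reward_model(
    pairs: list[tuple[list[float], list[float]]], lr: float = 0.1, epochs: int = 500
) -> list[float]:
    # Each pair is (features of the preferred response, features of the rejected one).
    w = [0.0] * len(pairs[0][0])
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(w, chosen) - reward(w, rejected)
            # Pairwise loss: -log(sigmoid(margin)). Its gradient with respect to w is
            # -(1 - sigmoid(margin)) * (chosen - rejected), so we step the other way.
            scale = 1.0 - sigmoid(margin)
            w = [wi + lr * scale * (c - r) for wi, c, r in zip(w, chosen, rejected)]
    return w


# Hypothetical human comparisons: [relevance, politeness] for preferred vs. rejected.
comparisons = [
    ([0.9, 0.8], [0.2, 0.7]),
    ([0.7, 0.9], [0.6, 0.1]),
    ([0.8, 0.5], [0.3, 0.4]),
]
weights = train_reward_model(comparisons)
print([round(wi, 2) for wi in weights])
```

Once trained, the reward model can score responses no human has rated, which is what lets the feedback loop scale beyond the original comparisons.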

Who Created ChatGPT?

ChatGPT was created by OpenAI, a research and development firm whose stated mission is to ensure that artificial general intelligence (AGI), which it describes as AI systems that are “generally smarter than humans,” benefits all of humanity.

ChatGPT was developed as a sibling model to InstructGPT, an earlier OpenAI model trained to follow instructions, and was designed to interact with humans in a conversational way. This means it can engage in dialogue, answer follow-up questions, and even admit mistakes, all with the intent of arriving at an appropriate conclusion.

The Future of ChatGPT: What’s Next?

OpenAI recently launched ChatGPT Enterprise, which offers greater security and privacy, as well as higher-speed access to the GPT-4 model released in March 2023. It also provides more advanced data analysis capabilities and is more customizable.

GPT-5, the next major model expected to power ChatGPT, is currently expected to launch by the end of 2025 and is anticipated to have a greater capacity to understand subtle turns of phrase as well as emotional content, making it even more lifelike.

Beware of the Drawbacks

Because the model is trained to produce the responses its questioner is likely to prefer, the most significant issue is that it won’t necessarily give the correct answer.

This is why organizations should tread carefully when using it to make market predictions or recommend a course of action. Indeed, simply rephrasing the same query can often lead to disparate, even contradictory, responses.

Another issue with ChatGPT is that it has a knowledge cut-off date of September 2021, which means its responses are based on training data that ends at that point and cannot reflect more recent events.

The intent of this was to prevent it from using sources or data that may be inaccurate or subject to change, but the practical effect is that its ability to assess current environments is severely limited.